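A non-profit academic platform founded by returnee scholars

Sharing information, integrating resources

Exchanging scholarship, with the occasional lighter moment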

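Interatomic potentials are a key foundation for studying material properties at the atomic scale, and their accuracy directly determines the quality of simulation results. Machine learning (ML) has advanced the development of interatomic potentials that can approach the accuracy of first-principles methods while keeping the low cost and parallel efficiency of empirical potentials. However, machine-learning interatomic potentials (MLIAPs) struggle to achieve broad transferability: they often fail to deliver consistent accuracy on configurations that differ from those used during training.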

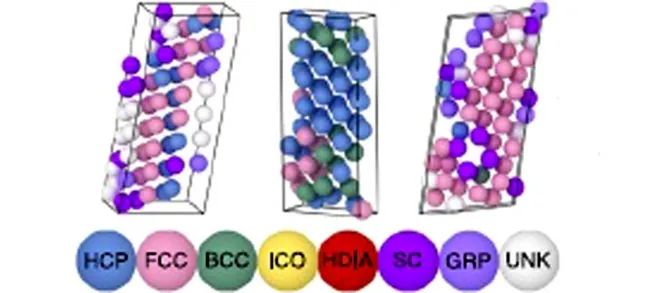

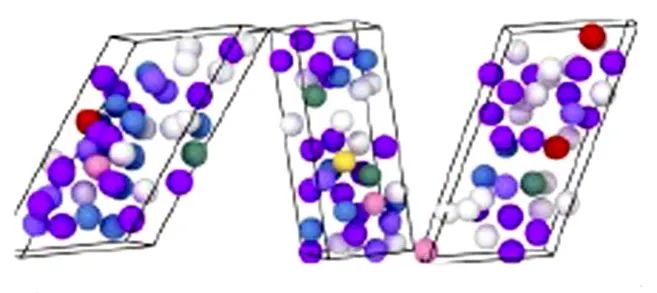

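To balance the accuracy and transferability of interatomic potentials, David Montes de Oca Zapiain and colleagues at Sandia National Laboratories in the United States developed a scalable, user-agnostic, data-driven entropy maximization (EM) protocol. Building on the idea of optimizing the entropy of the descriptor distribution, they reworked the training-set generation process and produced, in a fully automated way, a very large (>2×10⁵ configurations, >7×10⁶ atomic environments) and diverse tungsten dataset. They then trained multiple polynomial and neural-network interatomic potentials on this entropy-optimized dataset, and, for comparison, trained a corresponding set of potentials on a tungsten dataset curated with domain expertise (DE). Compared with the DE-trained models, the EM-trained models consistently and accurately capture the large training set, giving up a relatively small amount of accuracy in exchange for much stronger transferability; this balances accuracy against transferability and helps avoid extrapolation pitfalls. Potentials trained on the conventional DE dataset, by contrast, are somewhat more accurate on configurations similar to those in their training set, but their accuracy drops significantly when evaluated on out-of-sample configurations.

Because the EM approach is inherently scalable, fully automated, and independent of human input, it can generate very large, deliberately diverse training sets from which accurate and transferable MLIAPs can be obtained. The automated protocol for producing diverse training sets proposed in this work may also offer guidance, in data-sparse ML settings, on characterizing the accuracy of the resulting models.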
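The core idea, favoring training data whose descriptor distribution has high entropy, can be illustrated with a toy sketch. It assumes a nearest-neighbor (Kozachenko–Leonenko-style) entropy estimate with additive constants dropped, and a greedy selection of points from a fixed candidate pool; both are simplifications chosen for illustration, not the authors' protocol, which generates the configurations themselves. The rows of `pool` merely stand in for per-environment descriptor vectors, and the function names `nn_entropy` and `greedy_entropy_selection` are hypothetical.

```python
import numpy as np


def nn_entropy(X: np.ndarray) -> float:
    """Nearest-neighbor entropy estimate of the rows of X, up to constants.

    Kozachenko-Leonenko (k=1): H ~ (d/n) * sum_i log(r_i) + C(n, d),
    where r_i is the distance from point i to its nearest neighbor.
    C(n, d) is dropped, so only compare sets of the same size n.
    """
    n, d = X.shape
    diff = X[:, None, :] - X[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)  # ignore each point's distance to itself
    r = dist.min(axis=1)
    return float(d * np.mean(np.log(r + 1e-12)))  # epsilon guards duplicates


def greedy_entropy_selection(pool: np.ndarray, k: int, start: int = 0) -> list[int]:
    """Hypothetical greedy picker: repeatedly add the candidate that
    maximizes the entropy estimate of the selected descriptor set."""
    selected = [start]
    remaining = [i for i in range(len(pool)) if i != start]
    while len(selected) < k and remaining:
        # All candidates yield sets of equal size, so dropped constants cancel.
        scores = [nn_entropy(pool[selected + [j]]) for j in remaining]
        best = remaining[int(np.argmax(scores))]
        selected.append(best)
        remaining.remove(best)
    return selected


if __name__ == "__main__":
    # Three tightly clustered "environments" plus two distinct ones.
    pool = np.array([[0.0], [0.01], [0.02], [1.0], [2.0]])
    print(sorted(greedy_entropy_selection(pool, 3)))
```

In this toy pool, the selection skips the near-duplicate points at 0.01 and 0.02 in favor of the spread-out ones, which mirrors, in miniature, why an entropy objective pushes a dataset toward diversity rather than toward redundant samples of familiar configurations.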

The paper was recently published in npj Computational Materials 8:189 (2022) and is freely available as a PDF; its English title and abstract are as follows.

Training data selection for accuracy and transferability of interatomic potentials

David Montes de Oca Zapiain, Mitchell A. Wood, Nicholas Lubbers, Carlos Z. Pereyra, Aidan P. Thompson & Danny Perez

Advances in machine learning (ML) have enabled the development of interatomic potentials that promise the accuracy of first principles methods and the low-cost, parallel efficiency of empirical potentials. However, ML-based potentials struggle to achieve transferability, i.e., provide consistent accuracy across configurations that differ from those used during training. In order to realize the promise of ML-based potentials, systematic and scalable approaches to generate diverse training sets need to be developed. This work creates a diverse training set for tungsten in an automated manner using an entropy optimization approach. Subsequently, multiple polynomial and neural network potentials are trained on the entropy-optimized dataset. A corresponding set of potentials are trained on an expert-curated dataset for tungsten for comparison. The models trained to the entropy-optimized data exhibited superior transferability compared to the expert-curated models. Furthermore, the models trained to the expert-curated set exhibited a significant decrease in performance when evaluated on out-of-sample configurations.